Siri or Google Now may be your personal assistant. But your voice isn't the only one they listen to. As a group of French researchers has found, Siri and Google Now also helpfully obey the orders of any hacker who talks to them, even, in some cases, one who is silently transmitting commands via radio from as far as 16 feet away.

Two researchers at ANSSI, a French government agency dedicated to information security, have shown that they can use radio waves to silently trigger voice commands on any Android phone or iPhone that has Google Now or Siri enabled, provided it also has a pair of headphones with a built-in microphone plugged into its jack. The clever hack uses the headphones' cord as an antenna, exploiting it to convert surreptitious electromagnetic waves into electrical signals that appear to the phone's operating system to be audio coming from the user's microphone. Without speaking a word, a hacker could use that radio attack to tell Siri or Google Now to make calls and send texts, dial the hacker's number to turn the phone into an eavesdropping device, send the phone's browser to a malware site, or send spam and phishing messages via email, Facebook, Google+, or Twitter.

"The possibility of inducing parasitic signals on the audio front-end of voice-command-capable devices could raise critical security impacts," the two French researchers, José Lopes Esteves and Chaouki Kasmi, write in a paper published by the IEEE. Or, as Vincent Strubel, the director of their research group at ANSSI, puts it more simply: "The sky is the limit here. Everything you can do through the voice interface you can do remotely and discreetly through electromagnetic waves."

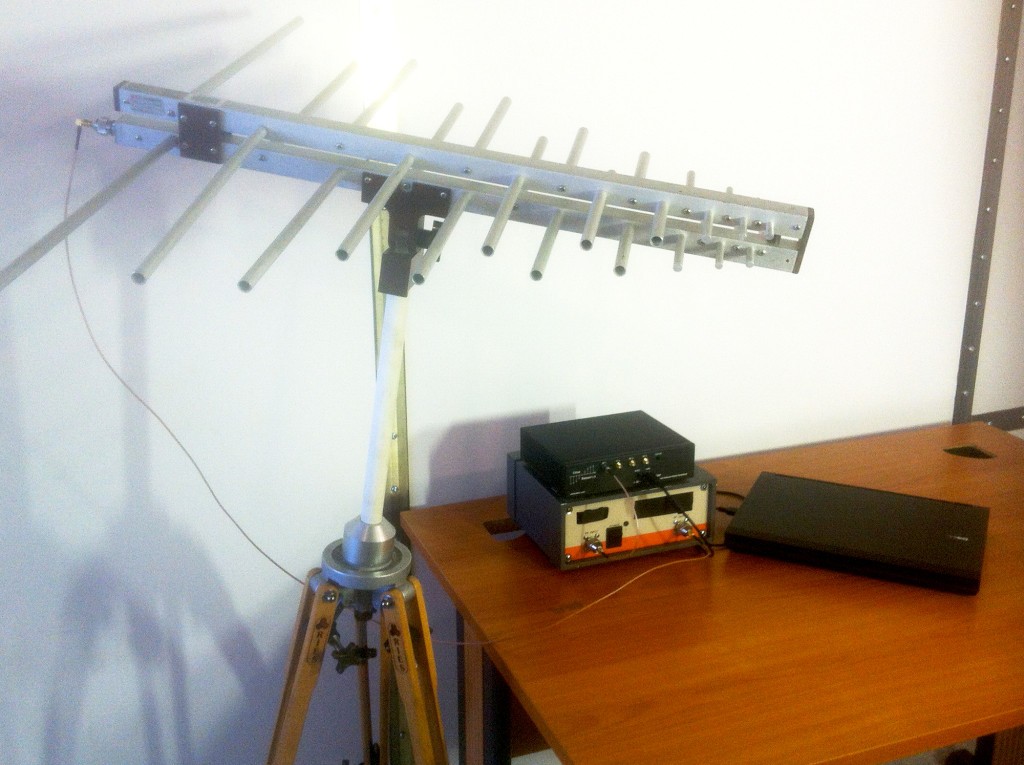

The researchers' work, which was first presented at the Hack in Paris conference over the summer but received little notice outside a few French websites, uses a relatively simple set of equipment: it generates its electromagnetic waves with a laptop running the open-source software GNU Radio, a USRP software-defined radio, an antenna, and an amplifier. In its smallest form, which the researchers say could fit inside a backpack, their setup has a range of about 6.5 feet. In a more powerful form that requires larger batteries and could only practically fit inside a car or van, the researchers say they could extend the attack's range to more than 16 feet.

In a video demonstrating the attack, the researchers commandeer Google Now via radio on an Android smartphone and force the phone's browser to visit the ANSSI website. (That experiment was performed inside a radio-wave-blocking Faraday cage, the researchers say, to comply with French regulations that forbid broadcasting certain electromagnetic frequencies. But Kasmi and Esteves say the Faraday cage wasn't necessary for the hack to work.)

The researchers' silent voice-command hack has some serious limitations: it only works on phones that have microphone-enabled headphones or a hands-free headset plugged into them. Many Android phones don't have Google Now enabled from the lock screen, or have it set to respond to commands only when it recognizes the user's voice. (On iPhones, however, Siri is enabled from the lock screen by default, with no such voice-identity feature.) Another limitation is that attentive victims would likely notice that the phone was receiving mysterious voice commands and cancel them before the attack was complete.

Still, the researchers contend that a hacker could hide the radio gear inside a backpack in a crowded area and use it to transmit voice commands to all the surrounding phones, many of which might be vulnerable and hidden in victims' pockets or purses. "You can imagine a bar or an airport where there are many people," says Strubel. "Sending out some electromagnetic waves might trigger a lot of phones to call a paid number and generate money."

Although the latest version of iOS now has a hands-free feature that allows iPhone owners to issue voice commands merely by saying "Hey Siri," Kasmi and Esteves say their attack works on older versions of the operating system, too. iPhone headphones have long had a button on their cord that lets the user activate Siri with a long press. By reverse engineering and spoofing the electrical signal of that button press, their radio attack can trigger Siri from the lock screen without any interaction from the user. "It is not necessary to have an always-on voice interface," says Kasmi. "It does not make the smartphone more vulnerable, it simply makes the attack less complex."

Of course, security-conscious phone users probably already know that leaving Siri or Google Now enabled on their phone's lock screen represents a security risk. At least in Apple's case, anyone who gets physical access to the device has long been able to use these voice-command features to extract sensitive information from the phone (from contacts to recent calls) and even hijack social media accounts. But the radio attack extends the range and stealth of that intrusion, making it all the more important for users to disable voice-command functions on their lock screen.

The ANSSI researchers say they have contacted Apple and Google about their work and recommended several fixes: they suggest, for example, that better shielding on headphone cords would force attackers to use a higher-power radio signal, or that an electromagnetic sensor in the phone could block the attack. But they note that the attack could also be prevented in software, by letting users create their own custom "wake" phrases that launch Siri or Google Now, or by using voice recognition to block strangers' commands.

Without the security measures Esteves and Kasmi suggest, any phone's voice features could represent a security liability, whether from an attacker with the phone in hand or one hidden in the next room. "To use a smartphone's keyboard you have to enter a PIN code. But the voice interface is listening all the time with no authentication required," says Strubel. "That's the main issue here and the goal of this paper: to point out these failings in the security model."

[…] Source: Siri & Google Now Can Be Hacked From 16 Feet Away […]